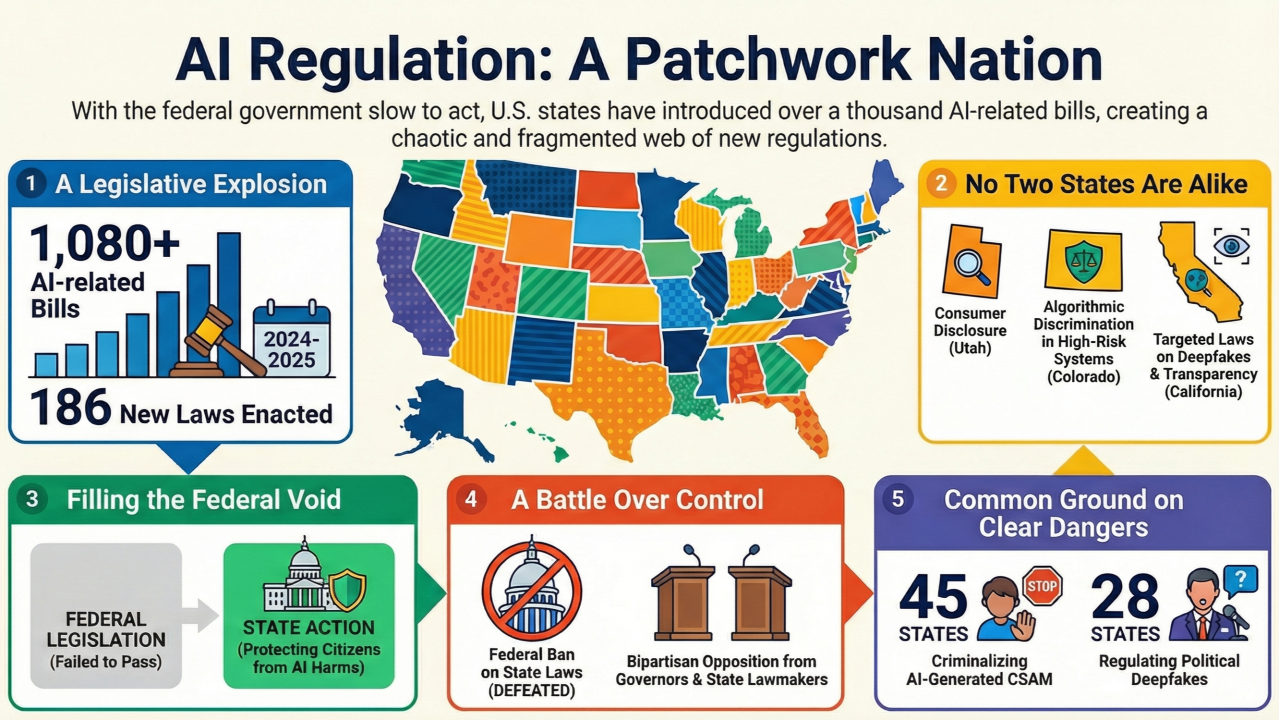

Texas's Responsible Artificial Intelligence Governance Act (TRAIGA) has been in effect since January 1, 2026. Colorado's AI Act takes effect June 30, 2026. Illinois, Virginia, Connecticut, and at least twenty other states have introduced AI governance legislation in the past eighteen months. Those bills are at various stages of committee review, floor consideration, or executive signature.

Congress has declined twice to preempt state AI laws. The Senate voted 99 to 1 to strip a ten-year preemption moratorium from the One Big Beautiful Bill Act. A similar preemption provision was then left out of the fiscal year 2026 National Defense Authorization Act in December 2025. A December 2025 executive order directing the DOJ to challenge state AI laws has not yet produced any filed actions against enacted state legislation.

The practical result for businesses operating across state lines is a compliance landscape that cannot be managed through a single federal framework because that framework does not exist. State-by-state AI compliance is the reality of 2026, and the pace of new legislation shows no sign of slowing.

The Two Dominant Legal Theories

State AI laws fall into two broad camps, and the difference between them matters substantially for compliance strategy.

Texas TRAIGA represents the intent-based approach. The law prohibits deploying AI systems with the intent to manipulate, discriminate, or harm. It creates specific documentation requirements and provides a safe harbor for businesses that align their AI governance with the NIST AI Risk Management Framework (AI RMF), a voluntary federal framework. Demonstrating reasonable care, including documented vendor relationships and human oversight, is the core compliance posture.

Colorado's SB 24-205 represents the impact-based approach. The law focuses on whether AI could produce algorithmic discrimination regardless of intent. It requires annual impact assessments for each high-risk AI system, documented risk management policies, consumer disclosure, and a meaningful appeal process. Colorado's requirements are more procedurally demanding than TRAIGA's for businesses that use AI in consequential decisions.

Other states are choosing between these two models and variations of them. Virginia's legislature passed an impact-based bill modeled on Colorado's in 2025, although the governor vetoed it. Illinois has amended its Human Rights Act to address employers' use of AI, adding to its existing biometric privacy and AI video interview laws. The pattern suggests that the two-model distinction will persist and deepen rather than converge toward a single national standard.

Who Is Actually Affected

The most common misconception about state AI governance laws is that they apply primarily to technology companies and AI developers. In fact, both TRAIGA and the Colorado AI Act also reach deployers, meaning businesses that use AI-powered platforms in consequential decisions.

That means the hiring manager who uses Indeed to sort applicants, the landlord who uses SmartMove to screen tenants, and the lender who uses AI-assisted underwriting software can all be deployers under these laws. The AI developers who built those platforms have their own compliance obligations. The deployer obligations, however, fall on businesses that may never have written a line of code.

As more states adopt similar frameworks, the pool of affected businesses will grow. It will include virtually any company that uses AI-enabled software in processes that affect employment, housing, credit, healthcare, or access to services.

The Federal Outlook

The March 2026 White House National Policy Framework for Artificial Intelligence recommends that Congress pass comprehensive federal AI legislation. The Framework is not binding. Congress has a full legislative calendar with no comprehensive federal AI bill ready for a floor vote. The most likely outcome in 2026 is continued state-level activity without federal resolution.

Businesses that are building AI compliance programs in 2026 should build them to satisfy the most demanding applicable state law — currently Colorado's — with flexibility to accommodate additional state requirements as they emerge. Building to a federal standard that does not yet exist is not a viable compliance strategy.

This article is for informational purposes and does not constitute legal advice.